“As the twenty-first century began, human evolution was at a turning point. Natural selection, the process by which the strongest, the smartest, the fastest reproduced in greater numbers than the rest, a process which had once favored the noblest traits of man, now began to favor different traits. Most science fiction of the day predicted a future that was more civilized and more intelligent. But, as time went on, things seemed to be heading in the opposite direction. A dumbing down. How did this happen? Evolution does not necessarily reward intelligence. With no natural predators to thin the herd, it began to simply reward those who reproduced the most, and left the intelligent to become an endangered species.”

— Idiocracy, 2006

“The years passed, mankind became stupider at a frightening rate. Some had high hopes that genetic engineering would correct this trend in evolution, but sadly, the greatest minds and resources were focused on conquering hair loss and prolonging erections.”

— Idiocracy, 2006

“Using AI to skip the slow, sometimes tedious work of learning isn’t the key to developing higher-order skills; it’s the surest way to prevent them from emerging at all.”

— Dr. Jared Cooney Horvath, neuroscientist and author of The Digital Delusion

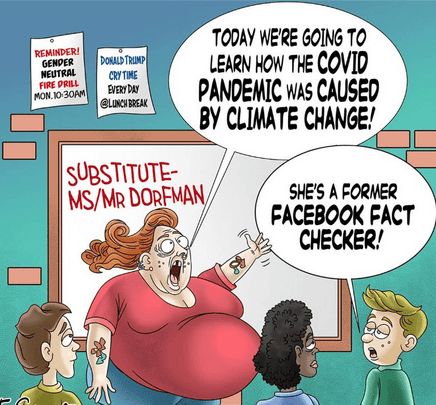

The decline is evident to many of us and shows up everywhere, from a child of about five having a severe emotional outburst when her mother confiscates her iPad to adolescents unable to formulate coherent ideas on nearly any subject. Despite this, both technology corporations and educational institutions appear willfully blind to the crisis, continuing unabated toward a future marked by diminished intellect. Idiocracy, which once seemed merely a satirical film, now resembles a foreboding documentary rather than fiction.

In a recent statement, Dr. Jared Cooney Horvath exposes the core of a growing problem in contemporary education and mental development. He clearly expresses what many observe: a generation facing difficulties in independent thought.

“When examining the evidence, it is apparent that after countries broadly integrate digital technology into schools, academic performance notably declines. Students who engage with computers roughly five hours daily for learning achieve scores that are more than two-thirds of a standard deviation lower than those who seldom or never use technology at school. This trend has been confirmed in studies involving 80 countries.”

The fundamental problem lies not in a deficiency of intelligence but in the absence of basic knowledge. For many years, the prevailing belief has been that in this era of information, facts are readily available at our fingertips, rendering memorization unnecessary. However, research in cognitive science offers a contrasting perspective. Critical thinking is impossible without a foundation of knowledge. One cannot form connections between ideas—a “web of knowledge”—if there are no starting points to build upon.

In the current digital environment, a misleading confidence in mastery has emerged. Young people who grow up using computers and smartphones often confuse quick access to information with genuine comprehension. Their devices serve as external storage for memory and external processors for reasoning. Since they have not been compelled to retain or work through information internally, they lack the mental strength required for profound intellectual engagement.

Requesting them to compose an essay—requiring synthesis, evaluation, and the development of a unique argument—is akin to asking someone to perform complex surgery without the necessary qualifications. This explains why they become overwhelmed when tasked with writing a paragraph about something they have read; their minds, conditioned to delegate the work to devices, simply shut down when independent effort is demanded.

This is not merely theoretical. Take, for example, a direct observation I made recently.

I watched a teenager engaged in an online night school biology assignment. The task was fairly simple: read a biology passage in one browser tab and then answer some questions in another. Straightforward, right?

Yet, what unfolded was quite different.

While he was “reading,” an entertainment video played in a separate window. His eyes would occasionally shift from the text to the video, and he would laugh intermittently. When it was time to respond to the questions, he switched back to the biology passage and skimmed it quickly, not to comprehend it but to find keyword phrases matching the questions. He copied these snippets and pasted them into the answer fields. This cycle repeated until the section was marked as “complete.”

This entire routine mimicked learning. It was the appearance of education without any real understanding.

Minutes later, I asked him a simple question: “What did you learn just now?” He struggled, attempting to recall a few words he had seen on his screen but failed to form a coherent answer. Eventually, he conceded what was obvious to both of us: he hadn’t learned a thing. Absolutely nothing.

I probed him further, inquiring, “What have you genuinely learned from all your online courses this semester?”

His answer was strikingly honest. He admitted that although he fulfills every requirement—submitting all assignments and progressing as expected—he hasn’t truly acquired any meaningful knowledge. Instead, he has mastered the art of managing digital tasks without genuine engagement or understanding.

What I noticed was not a lack of effort but rather a systemic flaw disguised as advancement.

This student has adapted to an environment that values task completion over deep learning. He treats his mind merely as a conduit, channeling information from the screen, through his hands, only to let it fade away unnoticed. The goal isn’t to comprehend but simply to finish. As a result, critical thinking, curiosity, and retention have become obstacles to bypass rather than abilities to cultivate.

The consequences extend well beyond the educational setting. As Sophie Winkleman emphasized in her 2025 speech at the Alliance for Responsible Citizenship, we are currently facing: “A lost and deeply damaged childhood, with screen addiction displacing nearly every wholesome activity you can think of. As Douglas Gentile puts it, time spent on screens is time not spent elsewhere. A healthy childhood should involve lots of free fun: drawing, running, reading, writing stories, make-believe, kicking a football around, even just staring out of the window and wondering. These are all hushed images in a sepia tint because they scarcely happen anymore.”

The decline of critical thinking unfolds gradually and almost imperceptibly. It occurs whenever we opt for a quick Google search instead of engaging in thorough reading, whenever mindless TikTok scrolling replaces moments of quiet reflection, and whenever we prioritize the speed of obtaining an answer over truly comprehending it. Dr. Horvath’s research serves as an essential reminder that in an era dominated by artificial intelligence, authentic human intellect requires intentional nurturing, or it risks fading away.

Winkleman advocates for excluding AI from educational environments, urging a return to “analogue learning” methods featuring blackboards and chalk rather than digital smartboards. She likens screens to “neurological junk food” and cautions that excessive digital exposure turns students into passive recipients rather than enthusiastic, active participants in their learning process.[3]

Take a moment to observe your surroundings. A whole generation has emerged whose foremost abilities involve swiping, scanning, and mechanically recalling isolated snippets of information. When asked to clarify how a basic engine operates, detail the sequence of a significant historical event, or outline the fundamental structure of our government, they often respond with blank expressions. They hold information devoid of context and data stripped of significance. Alarmingly, their reliance on immediate retrieval has become so profound that they fail to recognize the gaps in their knowledge—they remain unaware of what they do not know.

The data confirms what our eyes already see. Winkleman notes:

“Health professionals for Safer Screens recently issued guidance that 11- to 17-year-olds should have no more than one to two hours screen time per day. This includes everything: iPads, school laptops, smartphones. It’s all just screen time to the brain. And yet children aged 8 to 18 are, on average, spending seven and a half hours per day on screens outside of school hours.”

At an astonishing pace, we have shaped a generation that increasingly mirrors the dystopian reality depicted in Idiocracy: a society unable to engage in critical thinking, solve problems, or understand the essential principles that sustain a complex civilization. The humor of this satire has faded because it has essentially become reality.

The real issue is no longer whether this path leads to collapse, but whether we possess the resolve to change direction before the damage becomes irreversible. Unless we free children from this flawed method by replacing screens with books, passive scrolling with active curiosity, and superficial digital tasks with meaningful challenges, their potential will continue to decline, hastening the disintegration of our modern world. The future remains unwritten. However, if we fail to act, the grim prediction of Idiocracy will define our heritage.